The painful gap between seeing a problem and describing it

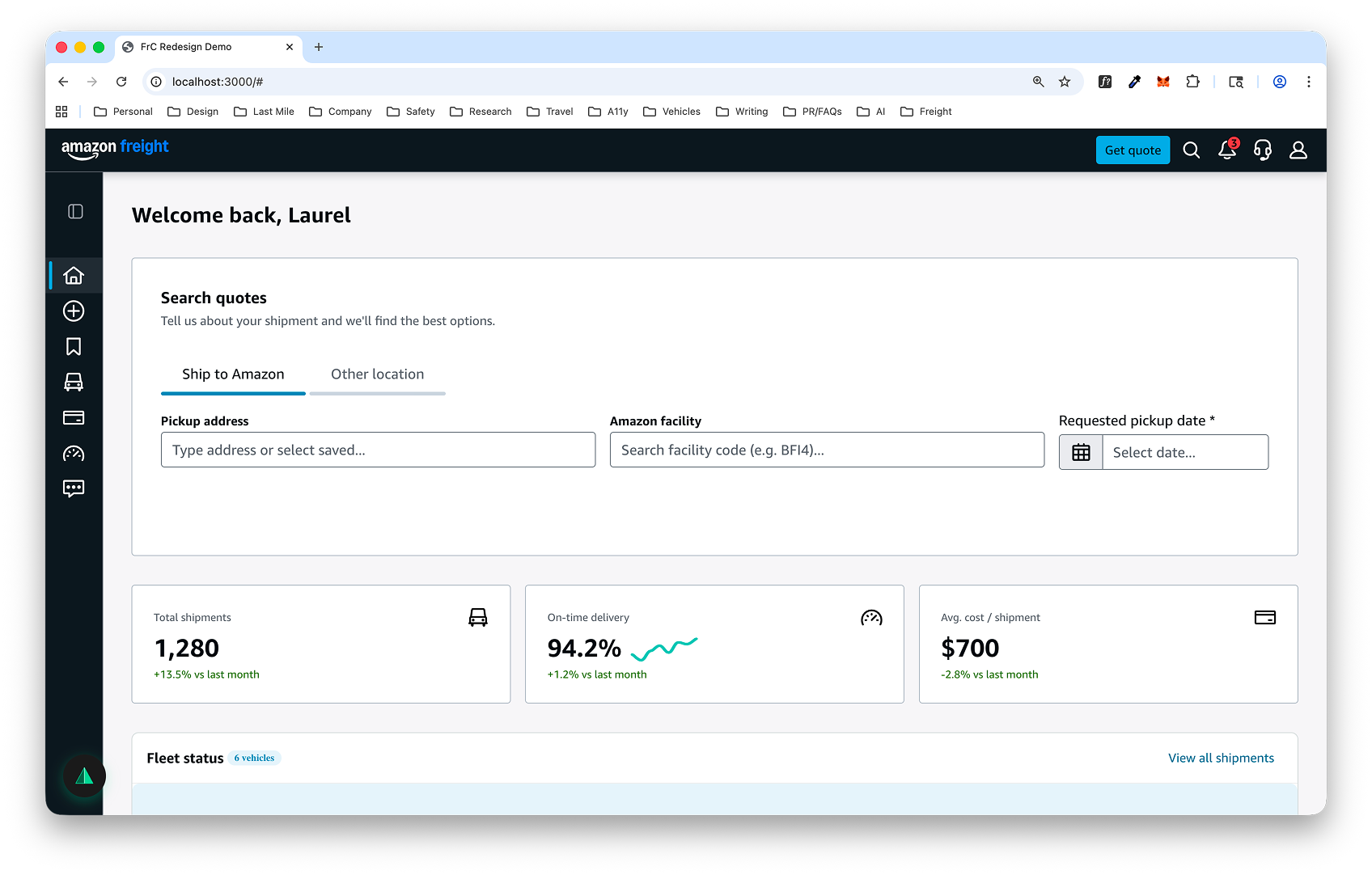

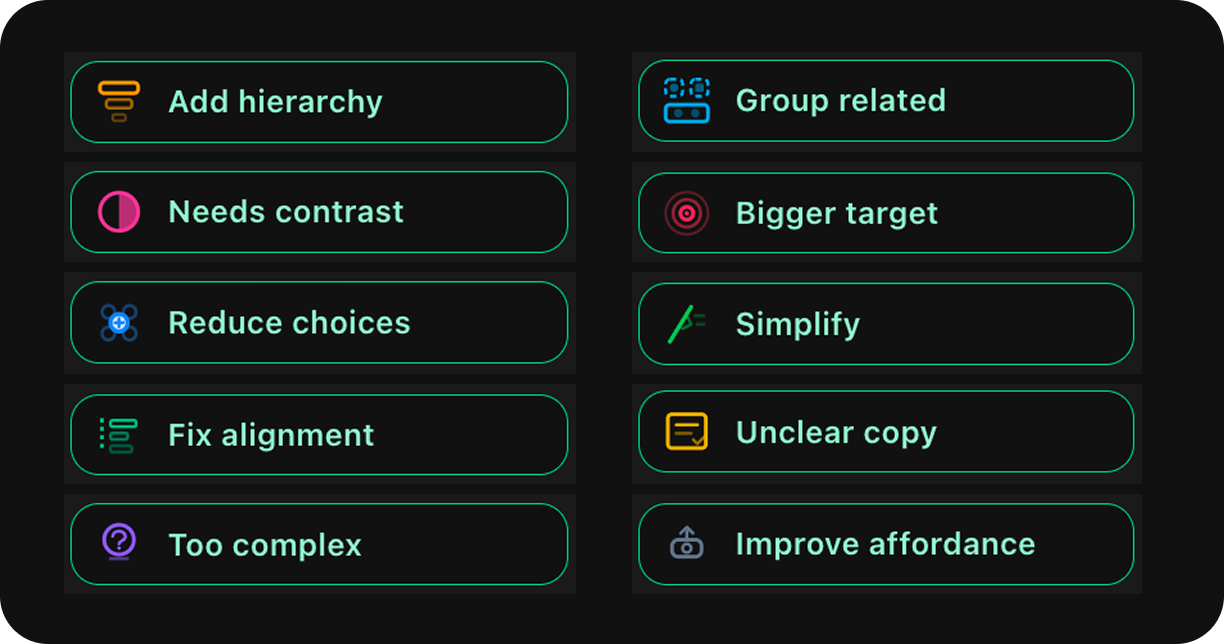

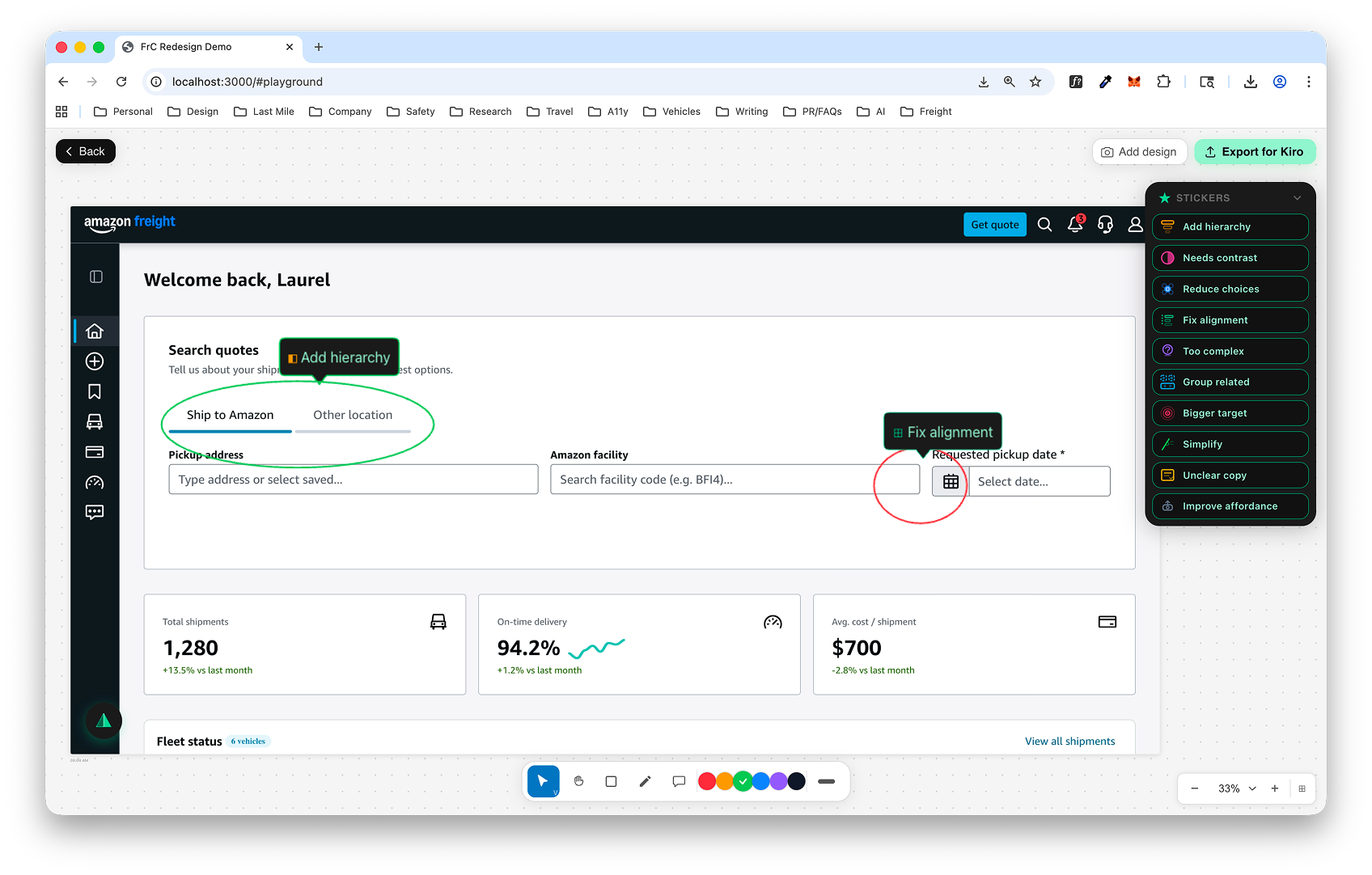

AI-powered design tools are changing how teams build interfaces, but they share a fundamental limitation: they rely on written instructions. When a designer spots an alignment issue, a contrast problem, or a hierarchy that's off, they have to translate what they see into words. That translation is slow and imprecise, and it loses critical context about exactly where the problem is and what needs to change.

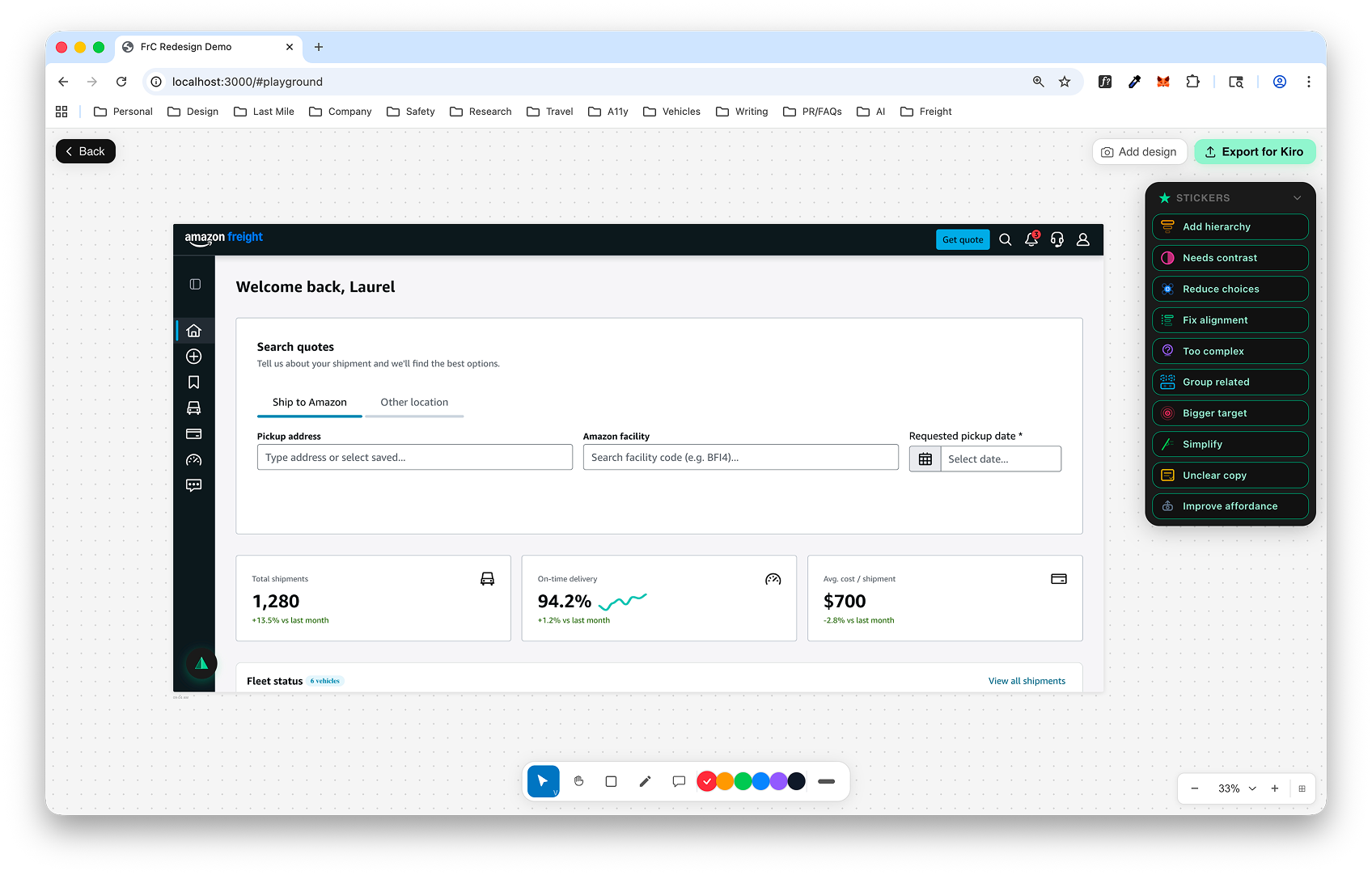

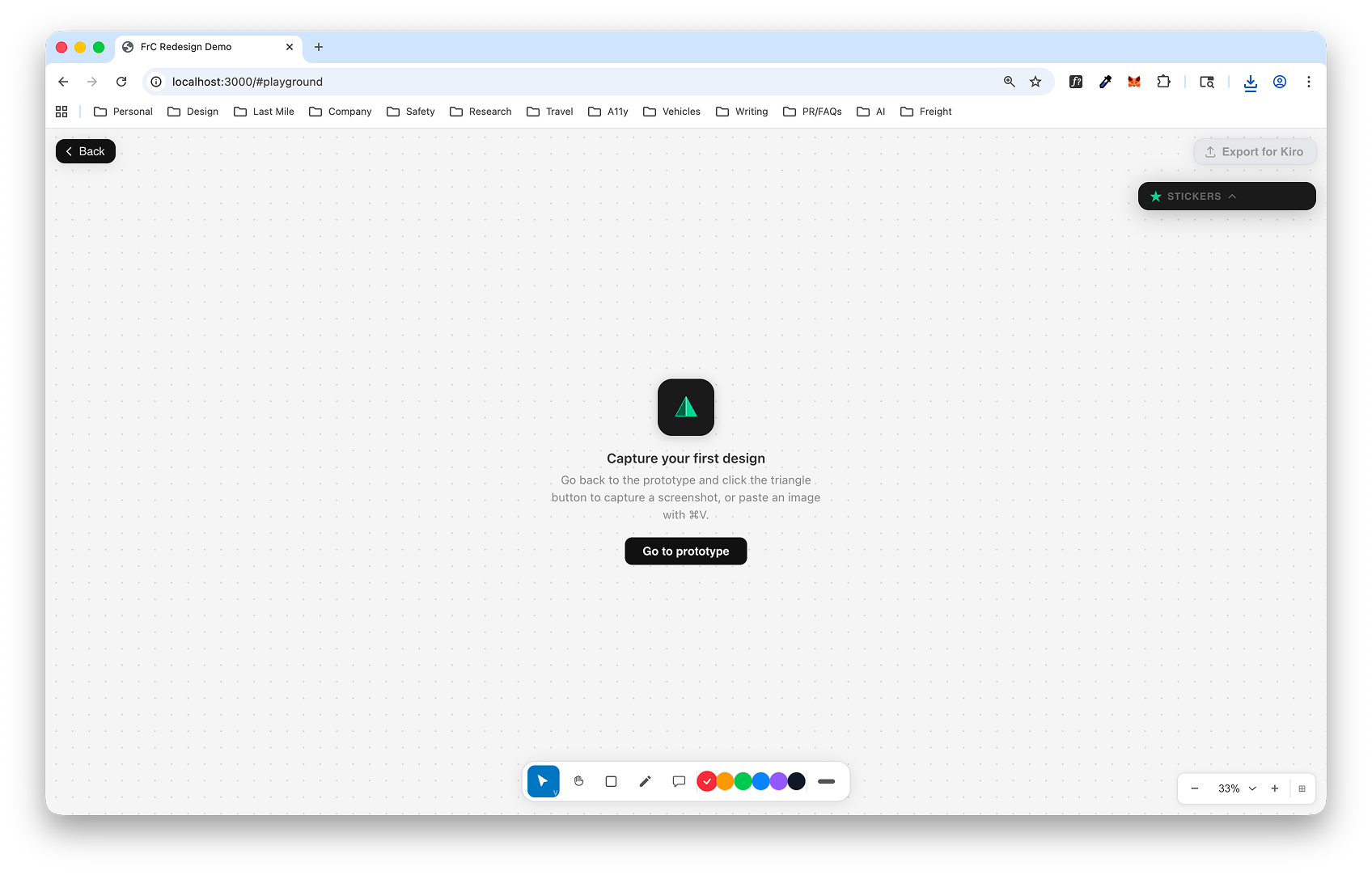

I wanted to build a tool for my design community at Amazon that closes this gap: something that lets designers point directly at the problem and communicate it visually, with the precision that a marked-up screenshot has and a paragraph of text lacks.